Fakeout

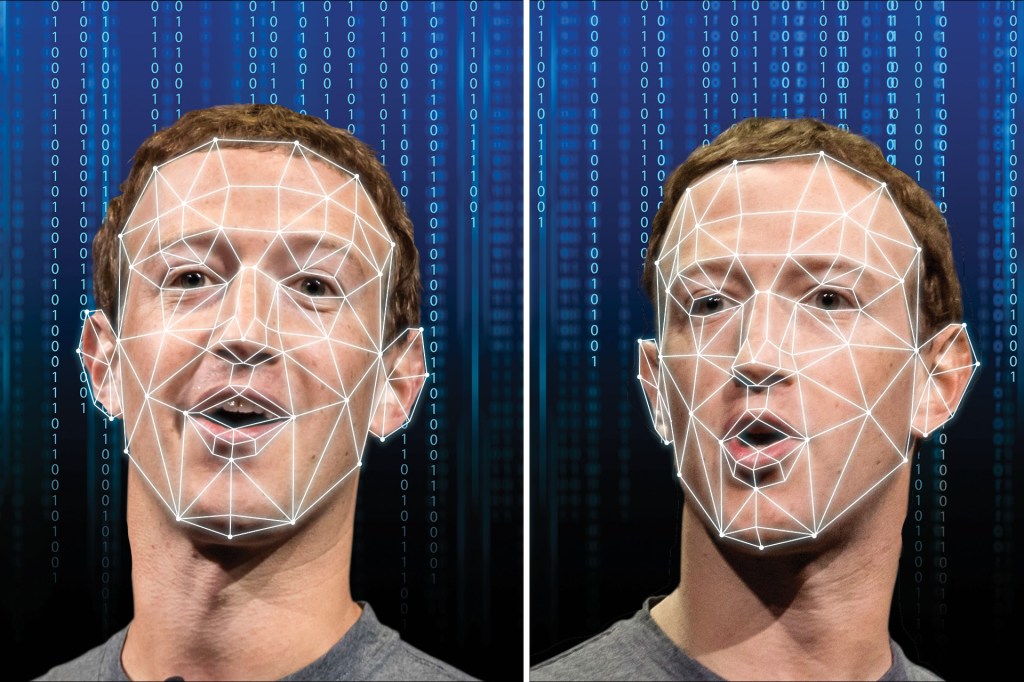

Deepfakes are videos with visual or audio content that has been manipulated. They make it seem as if the video’s subject is saying words he or she hasn’t actually spoken. In the past, videos like these could be made only by trained special-effects artists or video editors. Now, anyone with the time and the right tools can make a convincing deepfake.

These videos are dangerous. Many people are worried that deepfake technology will be used to trick the public. Imagine if someone made a deepfake of a world leader or other powerful person, then uploaded it to the Internet. How would people know they could believe what they were seeing and hearing?

Wael Abd-Almageed is trying to answer that question. He leads a team of five other researchers at the University of Southern California. To do his work, Abd-Almageed received a grant from the Defense Advanced Research Projects Agency. The agency is part of the United States Department of Defense.

Abd-Almageed and his team designed computer software that can determine whether a video is a deepfake. It uses artificial intelligence to search the video for clues that the subject’s face has been manipulated.

According to Abd-Almageed, deepfakes often contain mistakes that his team’s technology can detect. “If there is inconsistency in the video, such as how the eyes and mouth move, we can spot it,” he told TIME for Kids.

Don’t Be Fooled

How can you avoid being fooled by a deepfake? Abd-Almageed advises against immediately believing what you see online. Instead, make sure to research a video first. “Don’t take anything on the Internet for granted,” he warns. “Ask yourself, ‘Would this person actually say something like this?’” Look into the video’s source, too. Who made it? Who posted it?

Abd-Almageed also suggests watching a video at a slower speed so you can spot inconsistencies. This is possible using the settings on most popular video platforms, including YouTube. But he believes that, as deepfakes become more advanced, equally advanced technology must be created in response. “Every day, someone will create a better deepfake,” Abd-Almageed says. “We have to try to detect it.”